2026 was supposed to be the “Year of the AI Agent,” where Large Language Models (LLMs) handle everything from corporate research to automated customer support. However, for many organizations, that promise has been stalled by a sobering technical truth: AI bots are data sponges.

Whether your team is summarizing documents in ChatGPT, analyzing datasets in Copilot, or fine-tuning a proprietary model, every prompt poses a risk. If you aren’t careful, your corporate AI won’t just learn your business. It will memorize your sensitive data.

In this guide, we’ll break down why PII leakage is a critical 2026 risk and how to ensure your data is AI-ready so you can safely adopt innovation without slowing your team down.

AI as the New Data Leakage Channel

Most companies have established controls for email security and cloud access, but AI tools introduce new, often invisible data flows that bypass traditional security perimeters.

Even the most robust defense strategies now face specific risks, such as:

- Prompt leakage: Employees copy-pasting customer contracts or internal reports into AI tools for quick summaries.

- Uncontrolled API data flow: Internal apps sending massive datasets to external LLM providers without data classification.

- Shadow AI: Teams using unauthorized AI assistants that operate outside of IT visibility and logging.

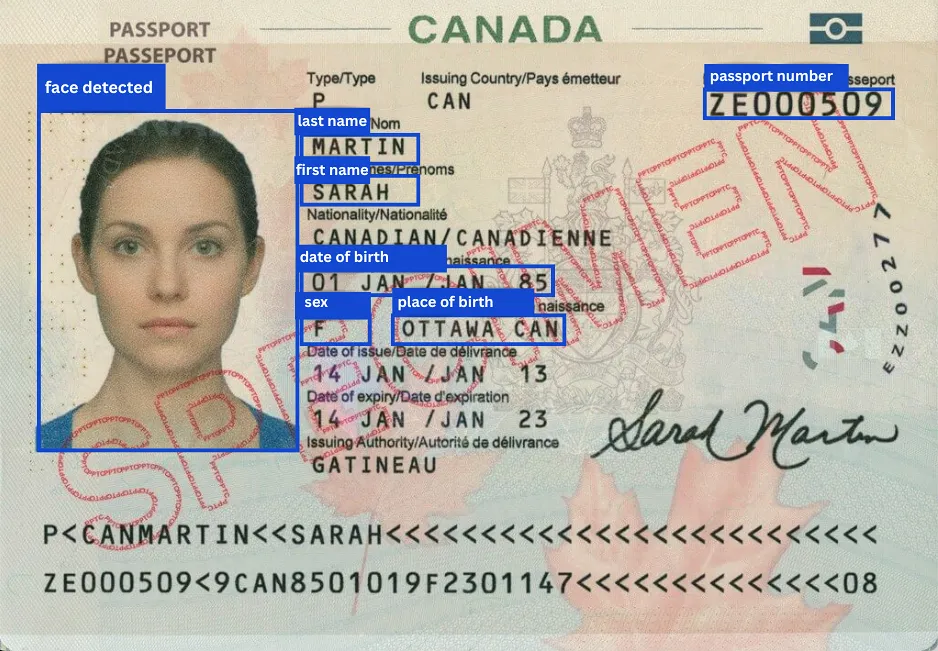

Unlike traditional systems, an LLM doesn’t inherently understand data sensitivity. To an AI model, a passport number is just a string of digits, and a medical report is just context. Without external controls, the model processes and potentially stores everything equally.

AI Compliance Nightmare

In 2026, “we didn’t know employees were using AI like this” is no longer a valid legal defense. Regulations like the EU AI Act, GDPR, and HIPAA emphasize three pillars that AI frequently challenges:

- Data minimization: You should only process the data strictly necessary for the task.

- The right to be forgotten: Individuals can request deletion of their personal data, which is hard to honor once a model has already learned from it.

- Purpose limitation: Using personal data for AI training often falls outside the original consent given by the user.

If a model is trained on un-sanitized PII, the resulting “leakage” isn’t just a security flaw. It’s a compliance violation. GDPR fines can reach €20 million or 4% of global annual turnover, and the top penalty tier of the EU AI Act reaches €35 million or 7%.

4 Steps to AI-Safe Data

Instead of blocking AI, which often leads employees to use even less secure “Shadow AI” tools, you must prepare your data for it. The process is straightforward once you implement a dedicated discovery and remediation layer.

1. Identify Sensitive Data Upstream

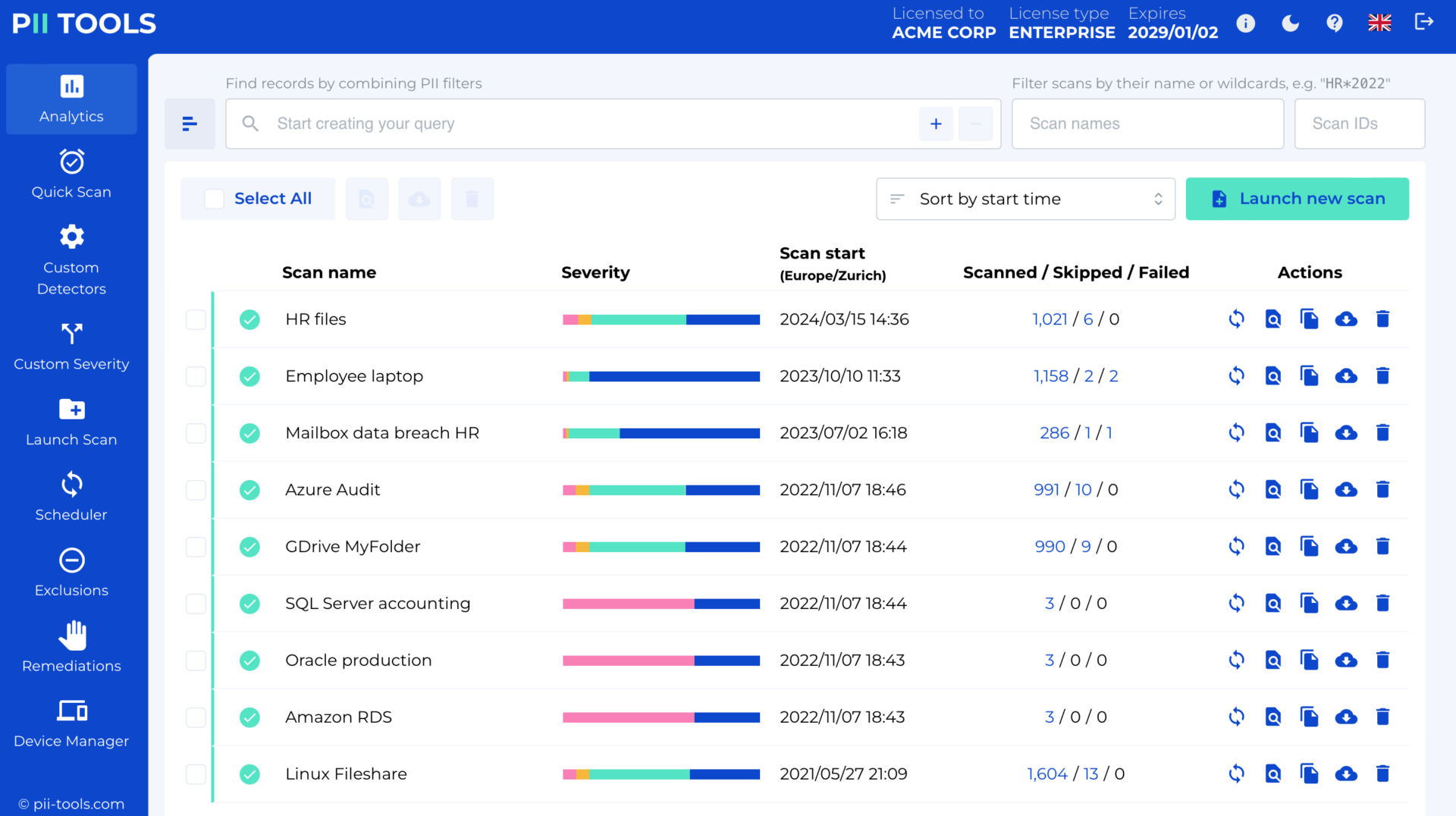

You cannot protect what you cannot see. Success begins with automated PII discovery across all documents, databases, and archives. You must classify data (PII, sensitive PII, confidential) before it reaches the AI supply chain.
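To make the classification step concrete, here is a minimal sketch in Python. It assumes two illustrative regex patterns and a hypothetical `classify` helper; none of these names come from a specific product, and real discovery needs far broader coverage (names, addresses, IDs in scanned documents, and so on) than regular expressions can provide. The “confidential” class is left out because it usually depends on business context rather than patterns.

```python
import re

# Hypothetical patterns for illustration only; production discovery
# needs many more detectors than these two regular expressions.
PATTERNS = {
    "email": (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "PII"),
    "us_ssn": (re.compile(r"\b\d{3}-\d{2}-\d{4}\b"), "sensitive PII"),
}

# Labels ordered from least to most sensitive.
SEVERITY = ["none", "PII", "sensitive PII"]


def classify(text):
    """Return (highest label found, list of (detector, match, label))."""
    findings = []
    for name, (pattern, label) in PATTERNS.items():
        for match in pattern.finditer(text):
            findings.append((name, match.group(), label))
    # The document's overall label is the most sensitive finding.
    level = max((SEVERITY.index(f[2]) for f in findings), default=0)
    return SEVERITY[level], findings


label, findings = classify("Contact jane@example.com about SSN 123-45-6789")
print(label, findings)
```

The label returned here is what downstream steps can use to decide whether a document is allowed anywhere near an AI tool.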

2. Sanitize and “De-Risk” Your Data

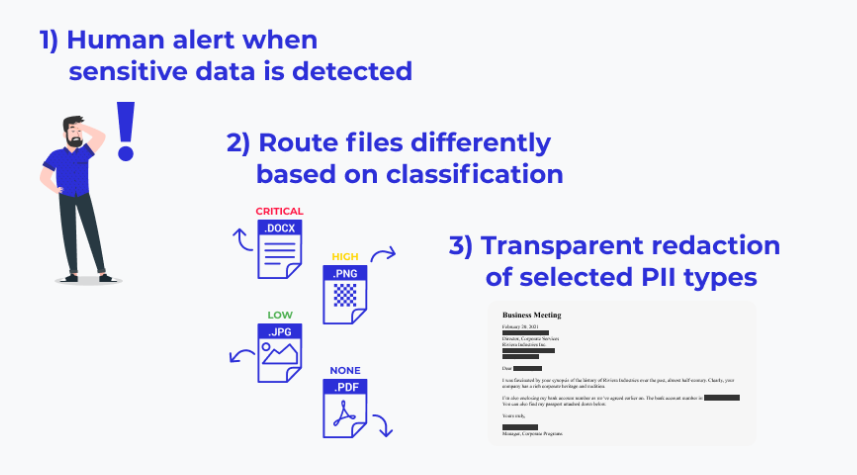

Instead of raw data, feed your AI sanitized versions. This involves:

- Masking: Replacing names or emails with functional placeholders (e.g., [USER_NAME]).

- Redaction: Completely removing sensitive fields that aren’t necessary for the AI’s task.

- Anonymization: Irreversibly removing identifiers so the data can no longer be linked back to an individual, which is what takes it out of the scope of strict privacy regulations such as GDPR.
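Masking and redaction can be sketched in a few lines of Python. This is an illustration under assumptions, not a complete sanitizer: the email pattern, the `[USER_EMAIL]` placeholder, and the helper names `mask_emails` and `redact_fields` are all hypothetical. Note that neither step alone amounts to anonymization, since other fields may still identify a person.

```python
import re

# Illustrative pattern; real masking also has to handle names,
# phone numbers, addresses, and other identifiers.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_emails(text):
    """Masking: replace each email address with a functional placeholder."""
    return EMAIL_RE.sub("[USER_EMAIL]", text)


def redact_fields(record, allowed):
    """Redaction: keep only the fields the AI task actually needs."""
    return {key: value for key, value in record.items() if key in allowed}


ticket = {
    "customer_email": "bob@corp.io",
    "ssn": "123-45-6789",
    "issue": "Please reply to bob@corp.io about the late invoice.",
}

# Drop everything except the issue text, then mask what remains.
safe = redact_fields(ticket, allowed={"issue"})
safe["issue"] = mask_emails(safe["issue"])
print(safe)
```

Using an allow-list for redaction, rather than a block-list, means a newly added sensitive field is excluded by default.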

3. Implement RAG and Prompt Guardrails

If you use Retrieval-Augmented Generation (RAG) to let AI read your company files, you need a real-time filter. This ensures that if an employee asks a question, the AI doesn’t accidentally pull an executive’s salary or a client’s private ID from a nested PDF in your knowledge base.
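One way to picture such a filter is a small function that sits between retrieval and generation. The sketch below assumes each retrieved chunk already carries a sensitivity label from the discovery step; the `Chunk` type, the label names, and `filter_chunks` are hypothetical and not tied to any particular RAG framework.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str
    sensitivity: str  # label assumed to be assigned during discovery


# Labels that must never reach the model's context (an assumption
# for this sketch; real policies may depend on the user's role).
BLOCKED = frozenset({"sensitive PII", "confidential"})


def filter_chunks(chunks, blocked=BLOCKED):
    """Split retrieved chunks into those safe to pass on and dropped sources."""
    allowed = [c for c in chunks if c.sensitivity not in blocked]
    dropped = [c.source for c in chunks if c.sensitivity in blocked]
    return allowed, dropped


retrieved = [
    Chunk("Travel policy: book flights 14 days ahead.", "policy.pdf", "public"),
    Chunk("Executive salary table for the current year.", "hr/pay.pdf", "confidential"),
]

allowed, dropped = filter_chunks(retrieved)
print([c.source for c in allowed], dropped)
```

Returning the dropped sources as well as the allowed chunks gives the audit trail something to record when the guardrail fires.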

4. Continuous Monitoring and Auditing

Compliance is never one-and-done. You need real-time visibility into the data being processed and where it’s being sent. Maintaining an audit trail of AI interactions ensures that your guardrails are actually working and allows you to identify new “Shadow AI” trends before they become a liability.
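As a rough illustration of what an audit entry might hold, the Python sketch below builds one record per AI interaction and appends it to a JSON Lines file. The field names, the `audit_record` and `append_audit` helpers, and the choice to store a hash instead of the raw prompt are assumptions for this example, chosen so the audit log does not itself become a store of sensitive data.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(user, destination, prompt, pii_types):
    """Build one audit entry; the raw prompt is hashed, never stored."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "destination": destination,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "pii_types": sorted(pii_types),
    }


def append_audit(path, record):
    """Append the entry as one JSON line to the audit file at `path`."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")


record = audit_record(
    user="analyst-17",                 # hypothetical user ID
    destination="external-llm-api",    # hypothetical destination name
    prompt="Summarize the contract for jane@example.com",
    pii_types={"email"},
)
print(record)
```

Entries whose destination is not on the approved list are a simple first signal of “Shadow AI” usage.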

The Smarter Approach: PII Tools AI Data Protector

The era of manual data auditing is over. To manage AI governance at scale, you need a solution that acts as the “Technical Truth” layer for your infrastructure.

The PII Tools AI Data Protector was engineered to bridge the gap between AI innovation and data sovereignty by providing:

- Automatic detection: Scans prompts and datasets for PII in real time, identifying risks before they are processed.

- Automated remediation: Cleans and prepares data before it ever reaches the LLM, ensuring only “AI-ready” information is shared.

- Sovereign deployment: PII Tools runs entirely on-premise or in your private cloud. This means your sensitive data never leaves your environment during the scanning or remediation process.

This approach transforms PII from a roadblock into a controlled asset, allowing your teams to move fast with AI, without creating compliance exposure.

Don’t Let Privacy Be the Bottleneck to Innovation

You don’t have to choose between AI power and data security. By implementing an automated remediation layer, you can deploy the world’s most advanced LLMs with total confidence.

Don’t just block AI. Prepare your data for it. See how our AI Data Protector works, and get an expert audit to discover how you can safely integrate LLMs into your 2026 workflow.